- Introduction

- Designing Security Protocols for Cryptographic Change

- Algorithm Negotiation, Transitions, and Security Trade-offs

- From Protocol Agility to Implementation Reality

- Abstraction, Modularity, and Configuration

- Treating Cryptographic Risk as an Organizational Responsibility

- The Crypto Agility Strategic Plan

- Cryptographic Rigidity in Hardware and Long-Lived Systems

- Shifting Cryptography Outside the System

- The Full Crypto Agility Strategy

- How can Encryption Consulting Help?

- Conclusion

Introduction

Cryptography is not a fixed asset. Algorithms that are considered secure today will inevitably weaken as new attacks emerge, computing power increases, and new computational realities such as quantum computing alter long-standing threat models. Systems that treat cryptography as static and hard-code it into designs, protocols, or infrastructure ultimately accumulate technical debt that makes secure evolution difficult or even impossible.

To remain trustworthy over time, organizations must be able to change cryptographic algorithms, parameters, and implementations without disrupting operations or degrading security. This requires crypto agility: the capability of a system, organization, or ecosystem to rapidly and safely adapt cryptographic algorithms, parameters, protocols, and implementations in response to:

- evolving threats and vulnerabilities,

- changing regulatory or compliance requirements,

- new computational environments and constraints.

NIST CSWP 39 “Considerations for Achieving Crypto Agility” addresses this challenge through the concept of cryptographic agility. Rather than framing agility as a single feature or configuration switch, the document presents it as a coordinated capability that spans technical design, operational processes, governance, and hardware constraints. Together, these dimensions define whether an organization can respond to cryptographic change in a controlled, timely, and secure manner.

The next section of this article examines how protocol design choices can either support cryptographic flexibility or lock systems into legacy algorithms, and why protocol-level agility is essential for secure and manageable cryptographic transitions.

Designing Security Protocols for Cryptographic Change

Security protocols are the foundation upon which cryptography is applied at scale, and they are often the least flexible component in an IT ecosystem. Once a protocol is standardized and widely deployed, changing its cryptographic behavior becomes extremely difficult. NIST emphasizes that cryptographic agility must be built into protocols from the outset, because protocols are long-lived and must remain secure across decades of cryptographic evolution.

A key insight is that protocols do not rely on individual algorithms but on sets of algorithms that collectively deliver security services, including:

- Authentication,

- Confidentiality,

- Integrity, and

- Key establishment

These sets are commonly expressed as cipher suites or equivalent constructs. From an agility perspective, this means that replacing or deprecating a single algorithm often has cascading effects across the protocol. Poorly designed protocols tightly couple cryptographic choices with message formats, key sizes, or control flows, making future transitions disruptive or even infeasible.

A critical design consideration is the mechanism used to identify the cryptographic algorithm or cipher suite in use. These identifiers may be:

- explicitly carried within the protocol

- managed by an external negotiation mechanism

- inferred indirectly from the protocol version number

Furthermore, it is highly desirable for standards developing organizations (SDOs) to be able to revise mandatory-to-implement algorithms without modifying the base security protocol specification. To achieve this goal, some SDOs publish a base security protocol specification and a companion document describing the supported algorithms, allowing one document to be updated without necessarily modifying the other.

Protocol designers generally choose between two primary identification approaches:

- Individual Algorithm Identifiers: Protocols like IKEv2 often negotiate algorithms using a separate identifier for each specific cryptographic function. While this provides flexibility, it places a burden on implementations to determine which combinations are acceptable during session establishment.

- Cipher Suite Identifiers: Protocols like TLS use a single identifier for a “cipher suite”, a collective set of algorithms for services like encryption, authentication, and key establishment. (In TLS 1.3 the suite identifier covers only the AEAD cipher and hash; key exchange and signature algorithms are negotiated through separate extensions.)

This approach ensures complete specifications but can lead to a “combinatoric explosion” when many combinations are possible. Regardless of the approach, consistency is vital for protocol designers. Furthermore, identifiers should ideally specify not just the algorithm, but also key sizes and other parameters to avoid the interoperability problems that arise when these details are left flexible or unnegotiated.
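As a minimal sketch of the cipher suite approach, the Python snippet below resolves a single identifier to a full set of parameters, including key size. The three code points are the values registered for these TLS 1.3 suites; the `CipherSuite` class, the `SUITES` table, and the `describe` helper are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CipherSuite:
    """One identifier pins the AEAD algorithm, its key size, and the hash."""
    name: str
    aead: str
    key_bits: int
    hash_alg: str


# TLS 1.3 cipher suite code points (two-byte registry values).
SUITES = {
    0x1301: CipherSuite("TLS_AES_128_GCM_SHA256", "AES-GCM", 128, "SHA-256"),
    0x1302: CipherSuite("TLS_AES_256_GCM_SHA384", "AES-GCM", 256, "SHA-384"),
    0x1303: CipherSuite(
        "TLS_CHACHA20_POLY1305_SHA256", "ChaCha20-Poly1305", 256, "SHA-256"
    ),
}


def describe(code_point: int) -> str:
    """Resolve an identifier to its parameters, rejecting unknown values."""
    suite = SUITES.get(code_point)
    if suite is None:
        raise ValueError(f"unknown cipher suite 0x{code_point:04x}")
    return f"{suite.name}: {suite.aead}, {suite.key_bits}-bit key, {suite.hash_alg}"


print(describe(0x1302))
```

Because the key size is part of what the identifier means, neither peer has to guess or negotiate it separately. Adding an algorithm means adding a table entry rather than changing message formats.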

While replacing a single algorithm can have cascading effects, robust algorithm identifiers are the primary technical mechanism for managing these transitions smoothly.

Another challenge arises from implicit cryptographic dependencies. These are assumptions embedded in protocol logic that depend on specific algorithm properties, such as fixed key sizes, deterministic behavior, or performance characteristics. Although not always visible in the protocol specification, these dependencies can silently prevent the introduction of new algorithms later.

- A common example is early versions of TLS and SSL, where protocol message structures and handshake logic implicitly assumed the use of RSA key exchange with fixed key sizes.

- Fields such as the ClientKeyExchange message were designed around RSA-encrypted premaster secrets of predictable length, and handshake timing assumed relatively fast, deterministic operations.

- These assumptions complicated the later introduction of alternative key exchange mechanisms, such as Diffie–Hellman and Elliptic Curve Diffie–Hellman, requiring substantial protocol redesign rather than simple algorithm substitution.

NIST stresses that crypto-agile protocols should minimize such hidden assumptions and isolate cryptographic functionality from non-cryptographic protocol logic wherever possible.

By designing protocols that treat cryptographic algorithms as replaceable components rather than permanent fixtures, organizations can ensure that protocol evolution remains possible. This design philosophy leads to the next consideration: how protocols negotiate and transition between cryptographic options securely.

Algorithm Negotiation, Transitions, and Security Trade-offs

Algorithm negotiation is one of the most powerful mechanisms for enabling cryptographic agility in security protocols. It allows communicating parties to dynamically select algorithms based on mutual support, enabling gradual migration to stronger cryptography without breaking compatibility.

For example, during a TLS handshake:

- The client sends a list of supported cipher suites.

- The server selects the strongest mutually supported suite, for example preferring an AES-256-GCM suite over an AES-128-CBC suite when the client offers both.
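The selection step can be sketched in a few lines of Python. This is an illustration only: the `select_suite` function is a hypothetical helper, and the assumption that the server's preference order decides the outcome is a simplification (real servers may be configured to honor the client's order instead).

```python
def select_suite(client_offer, server_preference):
    """Return the first suite in the server's preference order that the client offered."""
    offered = set(client_offer)
    for suite in server_preference:
        if suite in offered:
            return suite
    raise ValueError("no mutually supported cipher suite")


# The server lists its strongest suite first.
server_preference = ["TLS_AES_256_GCM_SHA384", "TLS_AES_128_GCM_SHA256"]

legacy_client = ["TLS_AES_128_GCM_SHA256"]
modern_client = ["TLS_AES_128_GCM_SHA256", "TLS_AES_256_GCM_SHA384"]

print(select_suite(legacy_client, server_preference))  # older client still connects
print(select_suite(modern_client, server_preference))  # newer client gets the stronger suite
```

Both clients connect to the same server with no protocol change. Deprecating the weaker suite later means removing one entry from `server_preference`.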

This approach allows organizations to:

- Gradually migrate to stronger cryptography

- Deprecate weaker algorithms over time

- Maintain secure, uninterrupted operations

All of this is achieved without modifying the protocol itself, preserving compatibility across systems. However, NIST emphasizes that algorithm negotiation is also a potential source of risk if not properly secured. One of the most serious threats is the downgrade attack, where an adversary interferes with negotiation messages to force the use of weaker algorithms. This can happen when:

- Negotiation messages are unauthenticated

- Protocols implement fallback or retry logic that accepts older algorithms

- Algorithm selection is not cryptographically bound to the handshake transcript

To prevent this, negotiation mechanisms must be cryptographically protected, and the final algorithm selection must be tightly bound to the protocol’s integrity guarantees. Without these protections, agility mechanisms can undermine security rather than strengthen it.
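One way to picture this binding is a MAC computed over everything that was negotiated. The sketch below is a simplified assumption, not the mechanism of any specific protocol: `transcript_mac` is a hypothetical helper, and `session_key` stands in for a key that a real handshake would derive from its key exchange.

```python
import hashlib
import hmac


def transcript_mac(key, offered, selected):
    """MAC over the full offer and the final choice, with length-prefixed fields."""
    mac = hmac.new(key, digestmod=hashlib.sha256)
    mac.update(len(offered).to_bytes(2, "big"))
    for name in [*offered, selected]:
        encoded = name.encode("ascii")
        mac.update(len(encoded).to_bytes(2, "big") + encoded)
    return mac.digest()


session_key = b"\x01" * 32  # hypothetical placeholder for a handshake-derived key

client_sent = ["AES-256-GCM", "AES-128-CBC"]
server_saw = ["AES-128-CBC"]  # an attacker removed the stronger option in transit
selected = "AES-128-CBC"

# Each side authenticates the negotiation as it saw it.
server_tag = transcript_mac(session_key, server_saw, selected)
client_tag = transcript_mac(session_key, client_sent, selected)

downgrade_detected = not hmac.compare_digest(server_tag, client_tag)
print("downgrade detected:", downgrade_detected)
```

Because the client's tag covers the list it actually sent, stripping an option changes the transcript and the tags no longer match, so the handshake can be aborted instead of silently continuing with the weaker algorithm.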

Hybrid mechanisms, for both signatures and key establishment, act as a “safety net” during the transition to post-quantum cryptography (PQC). By combining a traditional algorithm with a quantum-resistant one, these schemes keep data protected even if the new algorithm turns out to have an undiscovered weakness or a quantum computer breaks the old one. The core idea is that as long as at least one of the combined algorithms remains strong, the overall system stays secure.

However, there are trade-offs to using this approach:

- Increased Complexity: They make security protocols harder to build and much more difficult for experts to analyze.

- A Test of Agility: Successfully using hybrid schemes proves a protocol is truly “crypto-agile” because it shows the system is flexible enough to manage multiple sets of security rules (cipher suites) at the same time.
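For key establishment, the “at least one remains strong” idea can be illustrated by feeding both shared secrets into a single key derivation. The sketch below is an assumption-laden illustration: concatenate-then-KDF is one common combiner pattern, not something the source prescribes. The two input secrets are placeholders for the outputs of a traditional and a post-quantum key exchange, which are not computed here, and `combine_hybrid` and the `hybrid-demo` label are hypothetical names.

```python
import hashlib
import hmac


def hkdf_sha256(ikm, salt, info, length=32):
    """HKDF (RFC 5869) extract-then-expand using HMAC-SHA-256."""
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()  # extract step
    okm, block, counter = b"", b"", 1
    while len(okm) < length:  # expand step
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_hybrid(classical_secret, pq_secret):
    """Derive one session key that depends on both shared secrets."""
    # Length-prefix each input so the boundary between them is unambiguous.
    ikm = b"".join(
        len(secret).to_bytes(2, "big") + secret
        for secret in (classical_secret, pq_secret)
    )
    return hkdf_sha256(ikm, salt=b"\x00" * 32, info=b"hybrid-demo")


# Placeholder secrets; a real protocol would obtain these from two key exchanges.
session_key = combine_hybrid(b"a" * 32, b"b" * 32)
print(len(session_key))
```

An attacker who recovers only one of the two inputs still cannot compute the derived key, which is the property the hybrid approach relies on. The extra input and derivation step also hint at the added complexity listed above.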

Another important consideration is the trade-off between flexibility and complexity. Supporting a large number of algorithms increases agility but also expands the attack surface and implementation complexity. Conversely, supporting too few algorithms makes the protocol brittle in the face of cryptographic change. NIST frames crypto agility as a balancing act, as protocols should support enough flexibility to evolve while maintaining a manageable and well-understood security posture.

Therefore, crypto agility is a long-term protocol responsibility rather than short-term optimization. Protocols must support the full lifecycle of cryptographic algorithms from adoption to deprecation, without forcing disruptive redesigns. By enabling secure negotiation, minimizing hidden dependencies, and planning for inevitable cryptographic change, protocols can remain secure and interoperable even as the underlying cryptography evolves.

While this section defines what cryptographic change is allowed, the next section addresses whether it is achievable in practice. It shifts focus to implementations, where cryptographic rigidity most commonly emerges through hard-coded algorithms and tightly coupled application logic. NIST demonstrates that abstraction, modular cryptographic services, and configuration-driven control are essential for translating protocol-level flexibility into operational reality.

From Protocol Agility to Implementation Reality

Cryptographic agility must be built into security protocols, but protocol support alone is not enough. Even if a protocol allows multiple algorithms or secure negotiation, real-world systems can still be cryptographically rigid if their implementations hard-code cryptographic choices.

NIST explains that implementations often embed cryptographic assumptions deep within application logic, tying algorithms, key sizes, or modes of operation directly to code paths. When this happens, changing cryptography becomes a costly and risky engineering effort rather than a manageable operational task. In practice, this can look like fixed cipher suites hard-coded into the application, hard-coded key sizes, or algorithm-specific error handling that breaks if parameters change.

As a result, organizations frequently delay updates even when vulnerabilities are known, simply because the implementation burden is too high.

To address this, separation of concerns is a core principle of crypto-agile implementations. Applications should not hard-code specific cryptographic algorithms. Instead, they should request cryptographic operations through abstracted services or APIs, allowing the underlying implementation to select, negotiate, or upgrade algorithms without requiring changes to the application itself.

NOTE: Abstraction alone is not sufficient. It must be paired with policy enforcement, governance, and compliance controls to ensure that algorithm choices, key management practices, and cryptographic transitions adhere to organizational standards and regulatory requirements. Together, these measures extend the protocol-level agility discussed earlier into practical, auditable, and deployable systems.

Abstraction, Modularity, and Configuration

This section explores how crypto agility is practically achieved within various implementation environments, moving from high-level software down to fixed hardware.

Crypto APIs and Libraries

A Cryptographic Application Programming Interface (crypto API) acts as a buffer, allowing applications to request security services (like digital signatures or hashing) without needing to manage the underlying mathematical details. This allows developers to switch between algorithms (e.g., moving from AES-CCM to AES-GCM) by making the same API calls, provided the parameters are handled correctly.
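A minimal sketch of such an API is shown below, using only Python's standard library. Since the standard library has no AES, two HMAC variants stand in for the AES modes mentioned above. The `MacService` class and the `PROVIDERS` table are hypothetical names, not part of any real crypto library.

```python
import hashlib
import hmac

# Hypothetical provider table: algorithm identifier -> hash constructor.
PROVIDERS = {
    "hmac-sha256": hashlib.sha256,
    "hmac-sha3-256": hashlib.sha3_256,
}


class MacService:
    """Stable API: callers ask for protect/verify and never name an algorithm."""

    def __init__(self, key, active_algorithm):
        if active_algorithm not in PROVIDERS:
            raise ValueError(f"unsupported algorithm: {active_algorithm}")
        self._key = key  # a real deployment would scope keys per algorithm
        self._active = active_algorithm

    def protect(self, message):
        """Return (algorithm identifier, tag) so the tag stays self-describing."""
        tag = hmac.new(self._key, message, PROVIDERS[self._active]).digest()
        return self._active, tag

    def verify(self, message, algorithm, tag):
        """Verify with whichever supported algorithm produced the tag."""
        digest = PROVIDERS.get(algorithm)
        if digest is None:
            return False
        expected = hmac.new(self._key, message, digest).digest()
        return hmac.compare_digest(expected, tag)


key = b"k" * 32
before = MacService(key, "hmac-sha256")
algorithm, tag = before.protect(b"invoice-42")

# After the switch, the application code is unchanged; only the setting differs.
after = MacService(key, "hmac-sha3-256")
print(after.verify(b"invoice-42", algorithm, tag))
print(after.protect(b"invoice-42")[0])
```

The application calls `protect` and `verify` the same way before and after the change. Storing the algorithm identifier next to each tag is what lets data protected under the old algorithm remain verifiable during the transition.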

Operating System Kernels

While software libraries usually run in “user space” and are easier to update, protocols like IPsec often run in the OS kernel. Agility in the kernel is more difficult because algorithms are often fixed when the kernel is built, though designers can improve this by using loadable kernel modules.

Cloud-Native Environments

Modern distributed systems can use service meshes or sidecar proxies to centralise cryptographic policy enforcement. This abstracts the crypto logic away from individual microservices, making it easier to update libraries and rotate keys with minimal disruption.

Hardware and Embedded Systems

These represent the most significant challenge for agility. In many embedded systems, algorithms are “compiled in” at the time of manufacture to meet strict memory and timing requirements. Similarly, in hardware (like HSMs, TPMs, or specialized chips), the logic is often immutable once the chip leaves the factory. While FPGAs provide some flexibility for reconfiguration, most hardware requires long-term planning or physical replacement to change algorithms.

Legacy Systems

For older, “monolithic” systems where the original code cannot be safely modified, organizations can use a “crypto gateway” or a “bump-in-the-wire”. This architectural solution intercepts traffic and performs modern cryptographic functions externally, essentially “wrapping” the vulnerable legacy crypto in a secure layer.

With protocols and implementations enabling cryptographic change, the next section elevates the discussion to organizational responsibility and strategic planning. NIST emphasizes that cryptographic agility cannot rely solely on technical excellence; it must be managed as an enterprise risk. Through cryptographic inventories, governance structures, and a formal Crypto Agility Strategic Plan for Managing Organizations’ Crypto Risks, organizations can ensure that cryptographic transitions are anticipated, prioritized, and coordinated rather than reactive.

Treating Cryptographic Risk as an Organizational Responsibility

With cryptographic agility addressed at the protocol and implementation levels, the next question is why cryptographic risk must be managed as an enterprise concern. Cryptography underpins authentication, data protection, secure communications, and trust relationships across systems. When cryptographic mechanisms fail or are deprecated, the impact is rarely isolated, as it often affects multiple services, partners, and operational workflows simultaneously.

NIST emphasizes that organizations frequently underestimate cryptographic risk because cryptography is embedded deep within systems and remains invisible during normal operations. As a result, cryptographic transitions are often reactive, triggered by urgent vulnerabilities or external mandates. This reactive approach increases operational disruption and security risk, particularly when cryptographic dependencies are poorly understood.

Moving away from this pattern requires explicit governance and visibility. Organizations must understand where cryptography is used, how it is implemented, and which systems depend on it. A cryptographic inventory becomes the foundation for understanding and managing cryptographic assets, providing a comprehensive view of algorithms, key sizes, protocols, libraries, and hardware components in use.

With this visibility, organizations can assess the impact of proposed changes, prioritize upgrades, plan key rotations, and anticipate dependencies across systems. Without such an inventory, even well-designed and well-implemented crypto-agile systems cannot be effectively managed at scale, leaving organizations unable to respond efficiently to vulnerabilities, deprecations, or evolving threat landscapes.

This organizational framing naturally connects us back to the previous two sections: protocol and implementation agility only deliver value when the organization is prepared to recognize and act on cryptographic risk.

The Crypto Agility Strategic Plan

Building on this organizational perspective, this section introduces the Crypto Agility Strategic Plan for Managing Organizations’ Crypto Risks. Rather than focusing on specific algorithms or technologies, this plan establishes processes that allow cryptographic change to occur in a controlled, predictable, and coordinated manner.

NIST explains that an effective strategic plan defines how cryptographic decisions are made, who is responsible for them, and how transitions are prioritized and executed. This includes integrating cryptographic considerations into system lifecycles, procurement decisions, and risk management processes.

Supplier and third-party dependencies represent a significant cryptographic agility risk, so decisions must account for vendor support, update practices, and the agility of third-party components. By addressing these factors, organizations can ensure that cryptographic transitions are anticipated and planned, rather than executed under crisis conditions.

The strategic plan also aligns cryptographic agility with existing cybersecurity risk management frameworks. This alignment allows cryptographic risks to be assessed alongside other enterprise risks, ensuring leadership visibility and resource allocation, enabling prioritization and trade-off decisions during transitions.

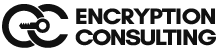

Crypto Agility (Overall Goal)

- Ensures cryptographic mechanisms can be quickly adapted to new threats, regulations, or technology changes.

- Operates as a continuous lifecycle, not a one-time upgrade.

Governance

- Defines cryptographic direction through standards, regulations, threat intelligence, business needs, and policies.

- Aligns cryptographic decisions with organizational and compliance requirements.

Assets

- Maintains visibility of all crypto-dependent assets such as code, applications, libraries, protocols, files, and systems.

- Serves as the foundation for managing cryptographic risk.

Management Tools

- Enable discovery, assessment, configuration, and enforcement of cryptography.

- Provide automated insight into crypto usage, vulnerabilities, logging, and zero-trust controls.

Data-Centric Risk Management

- Centralizes crypto data in an information repository.

- Uses risk analysis and prioritization to measure exposure and business impact.

- Delivers dashboards, reports, and KPIs for informed decision-making.

Risk Response

- Mitigation: Reduce risk through configuration or compensating controls.

- Migration: Replace weak or non-compliant cryptography with stronger alternatives.

An organization with a mature crypto agility strategy can identify which systems rely on aging cryptographic algorithms, prioritize those with the highest risk exposure, and schedule transitions during normal maintenance cycles. This preparedness significantly reduces disruption while preserving security and interoperability.
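The prioritization step described here can be sketched as a simple scoring pass over an inventory. Everything in the snippet is an illustrative assumption: the `WEAKNESS` scores, the doubling for internet-facing systems, and the system names are hypothetical, and a real plan would take such values from organizational policy.

```python
from dataclasses import dataclass

# Hypothetical weakness scores; higher means more urgent to replace.
WEAKNESS = {"SHA-1": 3, "RSA-2048": 2, "AES-256-GCM": 0}


@dataclass
class System:
    name: str
    algorithm: str
    internet_facing: bool


def risk(system):
    """Algorithm weakness, doubled when the system is exposed to the internet."""
    # Unknown algorithms default to 1 so they are flagged for review.
    base = WEAKNESS.get(system.algorithm, 1)
    return base * (2 if system.internet_facing else 1)


def prioritize(systems):
    """Return at-risk systems, highest risk first."""
    at_risk = [s for s in systems if risk(s) > 0]
    return sorted(at_risk, key=risk, reverse=True)


inventory = [
    System("billing-db", "AES-256-GCM", False),
    System("vpn-gateway", "RSA-2048", True),
    System("build-signing", "SHA-1", False),
    System("partner-api", "SHA-1", True),
]

for system in prioritize(inventory):
    print(system.name, risk(system))
```

The resulting ordering gives a starting point for scheduling transitions into maintenance windows, with the most exposed systems handled first.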

1. Protocol Design: Explicit Identification

Crypto agility is significantly easier to achieve when security protocols include explicit algorithm or cipher suite identifiers. Without these, introducing new algorithms often requires a costly and slow protocol version change. Hybrid mechanisms (combining traditional and post-quantum algorithms) serve as a critical “safety net” during transitions, ensuring security remains intact as long as at least one component algorithm is strong.

2. Implementation: Abstraction and Modularity

The primary technical mechanism for agility is the separation of application logic from cryptographic math.

- Crypto APIs and Providers: Applications should use stable APIs to request services from interchangeable Cryptographic Service Providers (CSPs).

- Configuration over Code: Updating cryptography should be a controlled operational activity driven by configuration and policy updates rather than disruptive code redevelopment.
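A hedged sketch of “configuration over code”: the policy lives in a document that operations teams can update, and the code only evaluates it. The JSON layout, the algorithm lists, and the `check_config` helper are hypothetical and chosen purely for illustration.

```python
import json

# Hypothetical policy document: allowed algorithms with minimum key sizes in bits.
POLICY_JSON = """
{
  "allowed": {"AES-GCM": 128, "ChaCha20-Poly1305": 256},
  "deprecated": ["3DES", "RC4"]
}
"""


def check_config(policy, algorithm, key_bits):
    """Classify a configured algorithm against the policy document."""
    if algorithm in policy["deprecated"]:
        return "reject: deprecated"
    minimum = policy["allowed"].get(algorithm)
    if minimum is None:
        return "reject: not in policy"
    if key_bits < minimum:
        return f"reject: key below {minimum} bits"
    return "accept"


policy = json.loads(POLICY_JSON)

print(check_config(policy, "AES-GCM", 256))
print(check_config(policy, "3DES", 168))
```

Deprecating an algorithm then means editing the policy document and redeploying configuration, with no change to `check_config` or to the applications that call it.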

3. Mature Strategy: Inventory and Visibility

A mature crypto agility strategy (Tier 3 – Repeatable and Tier 4 – Adaptive) moves from reactive fixes to proactive risk management. This includes:

- Complete Asset Inventory: Adopting an asset-centric approach to catalogue all cryptographic uses across software, hardware, data, and certificates.

- Dependency and Supply Chain Visibility: Mature organizations use automated discovery tools to gain full visibility into the technology supply chain. This allows them to identify specific algorithms and components within third-party products and determine if they can be updated modularly.

- Continuous Monitoring: At the highest maturity level, agility is monitored in near real-time, ensuring that policies adapt dynamically to the evolving threat landscape.

Together, these strategic practices complete the crypto agility story. Protocols enable change, implementations make it possible, and strategic planning ensures it happens deliberately and safely. With this foundation, organizations can treat cryptographic change not as an emergency, but as a managed and ongoing capability.

The next section addresses the hardest reality of cryptographic agility: not all systems can be easily changed. Hardware-based cryptography and long-lived legacy systems often lack update paths, creating unavoidable rigidity.

Building on this organizational preparedness, NIST describes architectural mitigation strategies, such as external cryptographic controls and gateways, that allow modern cryptography to be applied without modifying legacy components. The following sections explain how these strategies serve as mitigations when agility is constrained.

Cryptographic Rigidity in Hardware and Long-Lived Systems

1. Hardware Limitations and Resource Constraints

Hardware implementation is inherently limited by capacity. Many algorithms cannot be implemented on a single platform, and while optimization efforts such as accelerator reuse exist, further research is needed to support transitions from traditional public-key cryptography to post-quantum cryptography (PQC). Future cryptographic algorithm designs must account for these hardware resource limitations to remain practical and deployable.

2. Long Operational Lifetimes

Hardware cryptographic modules, including HSMs, embedded controllers, and secure elements, often have very long operational lifetimes, frequently spanning decades. These lifetimes can exceed the anticipated security lifetime of the algorithms, key sizes, and protocol constructions initially selected, creating a fundamental tension between system durability and the ability to evolve cryptography over time.

3. Constrained Environments

Many hardware platforms operate in constrained environments that inherently limit cryptographic flexibility. Embedded devices and secure elements may rely on fixed-function cryptographic hardware, have limited memory or processing capacity, and support only a narrow set of hardcoded algorithms. Update mechanisms, if present, are usually tightly restricted, making it difficult to introduce new algorithms or modify cryptographic behavior after deployment.

4. Compliance and Certification Constraints

Hardware security modules and similar devices often operate under strict compliance and certification requirements. Standards such as FIPS 140 or Common Criteria validate cryptographic primitives, key management logic, and firmware. Any change to these components can invalidate certification or require costly and time-consuming recertification, making operational cryptographic updates technically possible but often infeasible.

5. Challenges with Legacy Systems

Legacy systems frequently use cryptography that was considered secure at deployment but is no longer recommended. Many of these systems are mission-critical or difficult to modify, leading organizations to make conscious risk acceptance decisions and continue using weakened cryptography to preserve functionality. Cryptographic agility in real-world deployments must therefore account for such constraints.

Shifting Cryptography Outside the System

To address the limitations of hardware and legacy systems, the concept of shifting cryptography outside the system is introduced. Instead of modifying the legacy system itself, cryptographic protections are applied outside the system boundary, allowing organizations to improve security without altering the original hardware or software.

NIST describes this approach using concepts such as cryptographic gateways or “bump-in-the-wire” solutions, which intercept data flowing into and out of a legacy system. These gateways perform modern cryptographic operations such as encryption, authentication, or key establishment on behalf of the legacy system. From the system’s perspective, its original interfaces remain unchanged, while the surrounding environment enforces updated cryptographic protections.

This approach allows organizations to:

- Extend the operational life of legacy systems

- Introduce stronger cryptography without invasive changes

- Manage cryptographic transitions incrementally
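The gateway idea can be sketched as a thin wrapper around the legacy system's traffic. The example below is a simplified assumption rather than a production design: it adds only message authentication with HMAC-SHA-256 from Python's standard library (no encryption or replay protection), and the `CryptoGateway` class and frame contents are hypothetical.

```python
import hashlib
import hmac

TAG_LEN = 32  # HMAC-SHA-256 output size in bytes


class CryptoGateway:
    """Sits between the network and a legacy system that cannot authenticate traffic."""

    def __init__(self, key):
        self._key = key

    def outbound(self, legacy_frame):
        """Append an integrity tag to a frame leaving the legacy system."""
        tag = hmac.new(self._key, legacy_frame, hashlib.sha256).digest()
        return legacy_frame + tag

    def inbound(self, wire_frame):
        """Verify and strip the tag; only the original frame reaches the legacy system."""
        if len(wire_frame) < TAG_LEN:
            raise ValueError("frame too short")
        frame, tag = wire_frame[:-TAG_LEN], wire_frame[-TAG_LEN:]
        expected = hmac.new(self._key, frame, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, tag):
            raise ValueError("authentication failed")
        return frame


gateway = CryptoGateway(b"g" * 32)
wire = gateway.outbound(b"LEGACY|SET|valve=open")
print(gateway.inbound(wire))
```

The legacy system still sends and receives its original frame format. Changing the algorithm later only requires updating the gateways on each side of the link.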

However, there are some hardware platforms that support limited forms of agility, such as reprogrammable logic or modular cryptographic components. While not universally applicable, these designs demonstrate that hardware agility is possible when considered early in system design.

Ultimately, cryptographic agility is not about eliminating constraints, but about designing strategies that work within them. Where protocols and software offer flexibility, hardware and legacy systems require architectural workarounds and strategic planning. By combining external cryptographic controls with organizational preparedness, organizations can maintain security even when direct cryptographic updates are impractical.

The Full Crypto Agility Strategy

Crypto agility is not a fixed endpoint but a continuous lifecycle. Protocols enable change, implementations make it feasible, strategy makes it manageable, and hardware-aware approaches make it realistic in the presence of legacy constraints. These layers are interdependent: failure at any one undermines the others and reintroduces cryptographic risk.

Sustaining crypto agility therefore requires ongoing coordination across protocol design, implementation practices, governance strategy, and hardware constraints, with continuous reassessment as threat models, standards, and computational capabilities evolve.

The Role of CBOM in Enabling Strategic Crypto Agility

A Cryptographic Bill of Materials (CBOM) directly strengthens this implementation phase by providing structured, actionable visibility into cryptographic usage across assets. While NIST emphasizes the need for cryptographic inventory and prioritization, a CBOM operationalizes these concepts by explicitly documenting where and how cryptography is used, enabling impact analysis of algorithm changes, dependency mapping across applications and infrastructure, and informed transition planning for algorithm migration, key rotation, or deprecation.

A CBOM typically documents:

- Cryptographic algorithms and modes in use

- Key sizes and parameters

- Protocol dependencies

- Cryptographic libraries and modules

- Hardware-backed cryptographic components

- Lifecycle attributes, including validity periods, key and certificate rotation policies, and revocation mechanisms

This level of detail allows organizations to assess blast radius, sequence remediation activities, and execute cryptographic transitions in a controlled and auditable manner.

By maintaining a CBOM, organizations can quickly identify which assets are affected when cryptographic policies change or when algorithms are deprecated. This allows teams to determine whether an asset can be smoothly migrated or whether compensatory controls are required. CBOM data can also be integrated with automated enterprise management tools, enabling continuous assessment rather than one-time discovery.
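As a rough illustration of this kind of impact query, the sketch below models CBOM entries as simple records and splits affected assets into two groups. The field names are hypothetical and do not follow any particular CBOM schema, and treating every hardware-backed asset as needing compensating controls is a deliberate simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CbomEntry:
    asset: str
    algorithm: str
    key_bits: int
    library: str
    hardware_backed: bool


def affected_by(cbom, deprecated_algorithm):
    """Split affected assets into migratable ones and ones needing compensating controls."""
    hits = [entry for entry in cbom if entry.algorithm == deprecated_algorithm]
    return {
        "migrate": sorted(e.asset for e in hits if not e.hardware_backed),
        "compensate": sorted(e.asset for e in hits if e.hardware_backed),
    }


# Hypothetical inventory data for illustration only.
cbom = [
    CbomEntry("web-frontend", "RSA", 2048, "openssl", False),
    CbomEntry("payment-hsm", "RSA", 2048, "vendor-firmware", True),
    CbomEntry("log-archive", "AES-GCM", 256, "openssl", False),
    CbomEntry("mail-relay", "RSA", 3072, "openssl", False),
]

print(affected_by(cbom, "RSA"))
```

The same records could feed automated tooling, so the query runs whenever policy changes rather than as a one-time discovery exercise.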

In the context of zero-trust architectures, a CBOM helps identify where cryptographic enforcement must be strengthened externally, ensuring that legacy or non-agile components are surrounded by modern security controls. As a result, CBOMs bridge the gap between cryptographic strategy and execution, turning abstract planning into repeatable, auditable action.

How can Encryption Consulting Help?

Our Encryption Consulting CBOM Secure tool plays a key role in helping organizations prepare. Instead of dealing with spreadsheets, manual OpenSSL outputs, or scattered configuration files, our CBOM tool gives a clear view of crypto usage across environments. It shows which algorithms are in use, what needs to change for post-quantum security, and whether systems meet security goals. For organizations getting ready for board meetings, architecture choices, or compliance planning, our tool provides clarity and speed.

Our CBOM Secure is more than just a reporting tool; it also speeds up the process. It automates crypto inventories, checks TLS configurations, validates algorithms, and aligns policies, so teams can move from discovery to action without guessing. In future releases, Encryption Consulting plans to add automated fixes, cloud-native integrations, and policy enforcement to keep configurations in line with security standards at all times.

Now is a great time to get started: test PQC in a staging environment, map your current crypto usage, and begin creating internal policies. If your organization wants to pilot quantum-safe projects, give feedback, or help shape new features, we at Encryption Consulting encourage you to reach out. The earlier the teams start, the easier the long-term work will be.

If your organization needs support, structured assessments, or a guided approach, Encryption Consulting is ready to help with workshops, advice, and deployment assistance using our CBOM Secure. Contacting us today lets you move into the transition with confidence, instead of waiting until you are forced to change.

Conclusion

Taken together, NIST CSWP 39 presents cryptographic agility as a layered, end-to-end capability. Protocols must permit change, implementations must support it, organizations must plan for it, and architectures must accommodate real-world constraints.

Cryptographic agility is not about predicting which algorithms will fail next, but about ensuring that failure does not translate into crisis. By embedding agility across technical, operational, and strategic layers, organizations can treat cryptographic evolution as a managed, continuous process, preserving trust, security, and resilience in an environment where change is inevitable.

By leveraging automated enterprise management tools, adopting zero-trust-aligned controls where necessary, and maintaining a Cryptographic Bill of Materials (CBOM), organizations transform cryptographic change from an emergency response into a governed, repeatable process. This strategic readiness ensures that cryptographic policies remain enforceable across both agile and constrained environments, allowing organizations to sustain security, resilience, and trust as cryptographic requirements inevitably evolve.
