- Why is Traditional Security Failing?

- Why is Your Current Encryption Already in Trouble?

- Visibility is the Key to PQC Migration

- Understanding the Urgency

- Why is Hybrid Cryptography Your Friend Right Now?

- Understanding Cryptographic Agility

- Executing the Migration

- Governance and Ownership

- How can Encryption Consulting Help?

- Conclusion

Why is Traditional Security Failing?

What if the strongest lock your organization relies on today is not being broken, but quietly becoming irrelevant?

For decades, modern cybersecurity has depended on cryptography as the foundational mechanism for digital trust. Organizations built systems on mathematically hard problems, vast key spaces, and the assumption that classical computers could never feasibly brute-force protected data. Under those constraints, encrypted information could be treated as secure for any practical timeframe.

Quantum computing changes that assumption entirely. A cryptographically relevant quantum computer (CRQC) does not attack encryption through incremental speed improvements; it invalidates the hardness assumptions underlying much of today’s public-key cryptography. In practical terms, this represents a structural failure of the lock itself and not merely a stronger attempt to pick it.

More importantly, the risk timeline is already in motion. Adversaries are actively executing Harvest Now, Decrypt Later (HNDL) strategies, i.e., collecting encrypted traffic and sensitive data today with the expectation of decrypting it once quantum capabilities mature. For organizations responsible for long-lived secrets, regulated data, intellectual property, or national-scale infrastructure, the quantum threat is therefore present-day, not future-dated.

This reality forces a broader reconsideration of cryptography’s role in enterprise security.

Cryptography can no longer be viewed solely as a mechanism for confidentiality, authentication, and integrity. In modern digital systems, it underpins a wider trust fabric that directly affects operational resilience, compliance posture, ecosystem trust, and long-term data survivability.

Practically, this expanded responsibility spans:

- Confidentiality: protecting information from unauthorized disclosure.

- Integrity: ensuring data remains accurate and untampered.

- Availability: sustaining reliable and timely access to systems and services.

- Authentication: verifying identities of users, devices, and workloads.

- Validation: enforcing correctness of inputs, protocols, and processing logic.

- Non-repudiation: providing irrefutable proof of origin and action.

Together, these properties define the modern assurance boundary enforced by cryptography across enterprises, supply chains, and critical infrastructures.

The aim of this blog is to show that cryptographic modernization is no longer a distant planning exercise. It is an immediate engineering, governance, and risk-management responsibility.

The sections that follow examine what is genuinely at risk in the quantum transition, what post-quantum cryptography (PQC) realistically delivers, and how organizations can execute a phased, operationally safe migration without destabilizing the systems they depend on.

Why is Your Current Encryption Already in Trouble?

Most of the encryption securing today’s internet, whether it’s a TLS handshake in your browser, a VPN tunnel, an SSH session, or a digitally signed software update, depends on two hard mathematical problems: factoring very large integers and solving discrete logarithms.

Algorithms like RSA, ECDSA, and ECDH are built directly on these problems. Their security comes from a simple idea that while it’s easy to generate keys using these mathematical operations, reversing them without the private key would require an impractical amount of computational effort on classical computers.

For decades, they have been the backbone of public-key cryptography, enabling secure key exchange, authentication, and digital signatures on a global scale.

Today, quantum hardware is advancing fast enough that governments, intelligence agencies, and standards bodies treat CRQC as a serious planning assumption.

There are two timelines:

- Timeline One: HNDL, wherein an adversary who intercepts your encrypted traffic today doesn’t need to decrypt it today. They store the ciphertext and wait. If any data you’re protecting today has a confidentiality requirement that extends beyond the next ten to fifteen years, such as medical records, financial contracts, intellectual property, or state secrets, it is already at risk.

- Timeline Two: The authentication risk, wherein digital signatures, PKI, and certificate chains face a different kind of threat. Adversaries can’t retroactively forge a signature from yesterday. But the day a capable quantum computer exists, they can forge new signatures, impersonate trusted systems, and collapse the trust models that code signing, software distribution, and authentication infrastructure are built on.

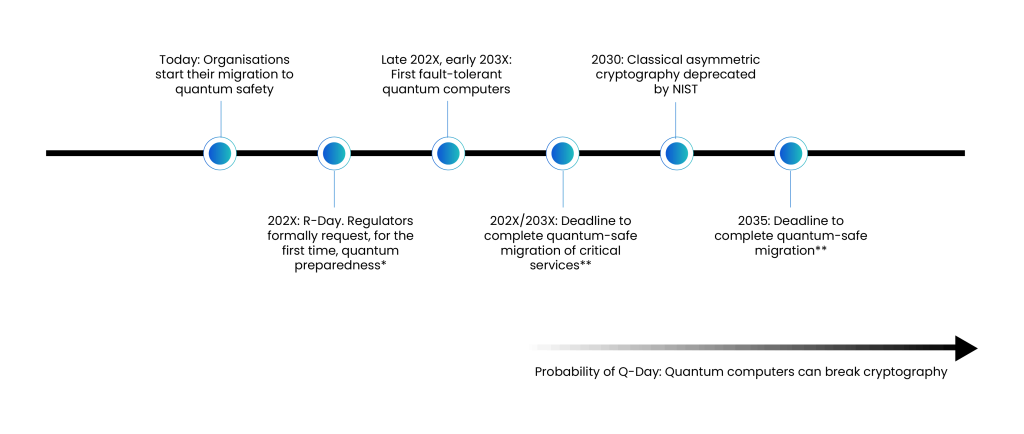

The figure above (Figure 1) illustrates why the risk is no longer theoretical: it is a race against time. On the left, organizations are beginning their migration to quantum-safe cryptography today, not because a CRQC exists yet, but because the transition itself will take years. In the late 2020s or early 2030s, the first fault-tolerant quantum computers are expected to emerge. Once that threshold is crossed, algorithms like RSA and ECC could be broken using Shor’s algorithm, rendering classical asymmetric cryptography insecure.

Regulators are anticipated to formalize quantum preparedness requirements (“R-Day”), followed by deadlines to migrate critical services, and eventually a broader deprecation of classical asymmetric cryptography. By 2035, full migration may no longer be optional. The timeline also reflects the growing probability of “Q-Day”, the moment quantum systems can practically break today’s cryptography.

The key takeaway is that encryption risk is not just about when quantum computers arrive, but about how long your data needs to remain secure. If your confidentiality window extends a decade or more, the migration clock has already started.
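One common way to make this concrete is Mosca's inequality: data is exposed if the time it must stay confidential plus the time the migration takes exceeds the time until a CRQC exists. The sketch below is a minimal illustration of that arithmetic; the function name and the year values are assumptions chosen for the example, not forecasts.

```python
def years_exposed(shelf_life_years: float,
                  migration_years: float,
                  years_until_crqc: float) -> float:
    """Return the exposure window under Mosca's inequality.

    Data is at risk when shelf_life + migration > years_until_crqc.
    A result of 0.0 means the migration finishes early enough.
    """
    gap = shelf_life_years + migration_years - years_until_crqc
    return max(0.0, float(gap))


# Illustrative inputs only: a 15-year confidentiality need, a 5-year
# migration, and an assumed 10 years until a CRQC exists.
print(years_exposed(15, 5, 10))  # 10.0
print(years_exposed(2, 3, 10))   # 0.0
```

The useful property of this framing is that two of the three inputs (data lifetime and migration duration) are under the organization's control or at least measurable, even though the third is not.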

Visibility is the Key to PQC Migration

This is the step most organizations skip in their eagerness to appear action-oriented, and it’s also the step that makes everything else possible. Before you write a single line of migration code, you need a complete picture of your cryptographic landscape.

Most organizations are surprised by how much cryptography they actually run. It’s embedded in TLS certificates, VPN configurations, SSH keys, code-signing pipelines, database encryption, mobile applications, APIs, firmware images, hardware security modules, and third-party software that they don’t fully control.

Attempting to migrate without a full inventory is like trying to replace the plumbing in a building without the blueprints. Therefore, gaining comprehensive visibility into the organization’s cryptographic landscape is essential. This visibility can be structured and analyzed across multiple infrastructure domains, organized layer by layer, as follows:

Network Perimeter and Transport Layer

Inventory every TLS certificate across both public-facing and internal services. Identify all VPN gateways and load balancers that terminate TLS sessions. The objective at this layer is to determine which cipher suites rely on RSA or ECDH-based key exchange, as these will require replacement or hybridization during migration.
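As a rough illustration of that triage, the sketch below labels key-exchange names that a scanner might report. The two name sets, the `classify_key_exchange` helper, and the example endpoints are assumptions made for this example; a real inventory would take its names from the scanning tool in use.

```python
# Group names a TLS scanner might report. Both sets are illustrative
# assumptions for this sketch, not an exhaustive or authoritative registry.
CLASSICAL_GROUPS = {"rsa", "x25519", "x448", "secp256r1", "secp384r1", "ffdhe2048"}
HYBRID_GROUPS = {"x25519mlkem768", "secp256r1mlkem768", "secp384r1mlkem1024"}


def classify_key_exchange(name: str) -> str:
    """Label a key-exchange name as hybrid, quantum-vulnerable, or unknown."""
    key = name.strip().lower()
    if key in HYBRID_GROUPS:
        return "hybrid"
    if key in CLASSICAL_GROUPS:
        return "quantum-vulnerable"
    return "unknown"  # flag for manual review rather than guessing


# Hypothetical scan results: endpoint -> negotiated key exchange.
scan = {
    "www.example.com:443": "X25519MLKEM768",
    "vpn.example.com:443": "secp256r1",
    "legacy.example.com:8443": "RSA",
}

for endpoint, group in scan.items():
    print(f"{endpoint:26} {group:16} {classify_key_exchange(group)}")
```

Returning "unknown" instead of a default verdict keeps unrecognized or proprietary names visible in the report, which matters for the custom implementations discussed later.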

Public Key Infrastructure (PKI)

Map your entire trust hierarchy: root and intermediate certificate authorities, certificate issuance workflows, code-signing infrastructure, and document-signing processes. This layer underpins organizational trust and identity. Any migration here must be executed with exceptional precision, as mistakes can cascade across the entire enterprise.

Application-layer Cryptography

Assess database encryption mechanisms (both column-level and tablespace-level), key management systems (KMS), and hardware security modules (HSMs). Review APIs using JWT or OAuth tokens signed with RSA. Examine mobile applications performing local key generation. Most critically, identify custom cryptographic implementations embedded within application code. These represent the highest risk, as they are often poorly documented and inconsistently maintained.

Embedded Systems and OT/IoT

Evaluate firmware-signing mechanisms, authentication schemes within operational technology (OT) environments, and industrial control systems. These systems are typically the most difficult to migrate due to constrained hardware resources and extended refresh cycles. They should be flagged early. Procurement planning for PQC-capable hardware replacements must begin now, even if deployment is several years away.

Third-Party and Cloud Dependencies

Catalog SaaS platforms processing sensitive data, cloud provider key management services, such as Amazon Web Services KMS, Microsoft Azure Key Vault, or Google Cloud KMS, and software supply chain tooling such as GitHub. For each dependency, establish vendor roadmaps for PQC support. Identify the contractual, regulatory, or procurement levers available to influence vendor transition timelines.

For each asset you catalog, record three things: the cryptographic algorithm in use, the expected lifespan of the data or system it protects, and who owns and can actually update it. That third point is often where migrations stall. Cryptographic debt is spread across team silos, and no single team has end-to-end visibility or the authority to drive change alone.
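The three fields described above map naturally onto a small record type. The following is a minimal sketch, assuming a hypothetical `CryptoAsset` structure and made-up inventory entries; the point is that a missing owner becomes something you can query for rather than discover mid-migration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CryptoAsset:
    name: str
    algorithm: str            # cryptographic algorithm in use
    data_lifespan_years: int  # how long the protected data or system must stay secure
    owner: str                # team that can actually update it; "" if nobody is identified


def assets_without_owner(assets: list[CryptoAsset]) -> list[str]:
    """Return the names of assets that nobody is accountable for updating."""
    return [a.name for a in assets if not a.owner.strip()]


# Hypothetical inventory entries for illustration.
inventory = [
    CryptoAsset("public-web-tls", "ECDHE-RSA-2048", 1, "platform-team"),
    CryptoAsset("patient-archive-keys", "RSA-3072", 30, "data-engineering"),
    CryptoAsset("plant-firmware-signing", "ECDSA-P256", 20, ""),
]

print(assets_without_owner(inventory))  # ['plant-firmware-signing']
```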

Understanding the Urgency

Not every organization faces the same urgency, and knowing where you fall shapes everything about how aggressively you need to move.

Urgent Adopters

These organizations handle data that needs to remain confidential for more than ten years, operate critical infrastructure or long-lived systems that are hard to upgrade, or manage data that adversaries would be highly motivated to collect today and decrypt later. Intelligence agencies, defense contractors, healthcare systems storing lifetime patient records, financial institutions, and telecommunications operators handling core infrastructure typically fall here. If this is you, migration planning should be underway now, not next fiscal year.

Regular Adopters

These cover most commercial enterprises. Data sensitivity is real but bounded, and systems are updated on reasonable cycles. You have time to plan carefully, but you don’t have unlimited time. The right move is to begin your inventory, start building cryptographic agility into every new system you build or buy, and create a phased migration roadmap with clear milestones and accountable owners.

Cryptography Vendors and Platform Builders

If you build cryptographic libraries, security products, networking equipment, or cloud platforms, your obligations are arguably more urgent than those of urgent adopters, because your decisions cascade downstream to every customer. Your roadmap needs to include PQC support in APIs, documentation, and default configurations. When your customers ask about your PQC timeline, you should already have a specific answer.

Why is Hybrid Cryptography Your Friend Right Now?

You almost certainly cannot migrate your entire cryptographic infrastructure overnight. Systems must remain operational, backward compatibility with partners and customers must be preserved, and new algorithms need thorough testing before they carry production workloads. This is why hybrid cryptography is widely recommended as a bridge strategy, with standards bodies such as NIST and ETSI publishing guidance that accommodates it.

The principle is straightforward. Instead of replacing ECDH key exchange with ML-KEM entirely, you run both simultaneously and combine the resulting shared secrets, so an attacker needs to break both to compromise the session. If a flaw is later discovered in the new PQC algorithm, your security does not regress: you retain classical protection while also gaining quantum resistance.

In practice, a hybrid TLS key exchange works as follows: the client and server perform two key establishment operations, one classical (say, X25519) and one post-quantum (ML-KEM-768). The resulting key material is combined using a key derivation function into a single session key. Both components must be compromised to recover that key. The overhead is measurable but acceptable for most use cases.

Therefore, hybrid schemes provide security even if the PQC component is later found to be broken and also protect against quantum attacks even if the classical component is compromised, making them the wise choice during this transition period.
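The combining step can be sketched with the Python standard library alone. In the example below, random bytes stand in for the X25519 and ML-KEM-768 shared secrets (no real key exchange is performed), the secrets are concatenated and passed through HKDF with SHA-256 as defined in RFC 5869, and the `combine_secrets` helper, its concatenation order, and its `info` label are assumptions for illustration. It is not the TLS 1.3 key schedule.

```python
import hashlib
import hmac
import os


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256: extract a PRK, then expand it."""
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_secrets(classical_secret: bytes, pq_secret: bytes) -> bytes:
    """Derive one session key from both shared secrets (order is an assumption)."""
    return hkdf_sha256(
        ikm=classical_secret + pq_secret,
        salt=b"\x00" * 32,
        info=b"hybrid-kex-demo",  # hypothetical context label
    )


# Stand-ins for the two shared secrets; real ones come from X25519 and ML-KEM-768.
classical = os.urandom(32)
post_quantum = os.urandom(32)

session_key = combine_secrets(classical, post_quantum)
print(session_key.hex())
```

Because both secrets feed the same derivation, an attacker who recovers only one of them still faces 256 bits of unknown input, which is the property the hybrid approach relies on.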

The hybrid phase is a transition strategy, not a permanent destination. As confidence in PQC algorithms grows through real-world deployment, sustained academic scrutiny, and the passage of time without breaks, organizations can gradually phase out the classical component. That transition should be event-driven, triggered by algorithm maturity milestones, standards body deprecation schedules, and internal audit results rather than by arbitrary timelines.

Understanding Cryptographic Agility

One of the most important, yet often overlooked, realities of cryptography is that no algorithm remains secure forever. What is considered strong today may become insufficient tomorrow due to advances in cryptanalysis, computing power, or new attack techniques. This has happened repeatedly in the past as algorithms once trusted for decades were eventually deprecated or replaced when weaknesses emerged. Examples include SHA-1, which was widely trusted before being deprecated; DES, which became insecure faster than expected; and MD5, which remained in production long after weaknesses were known.

The transition to PQC is not a one-time event, but part of a broader pattern of continuous cryptographic evolution. This is why cryptographic agility is essential.

Cryptographic agility is the ability of an organization to update, replace, or strengthen cryptographic algorithms, key sizes, and protocols without disrupting systems or requiring major architectural changes. It ensures that cryptographic transitions can be performed in a controlled, predictable, and low-risk manner. Without agility, even routine cryptographic updates become complex and error-prone, often requiring code changes, system downtime, and coordination across multiple teams and vendors.

In agile systems, cryptographic mechanisms are not hardcoded into application logic. Instead, they are abstracted and managed through centralized policies, configuration layers, and key management systems. Applications rely on these policies rather than embedding specific algorithms such as RSA or ECDSA directly into their implementation. This allows organizations to update algorithms in one place and have those changes propagate across systems automatically. Key management systems and hardware security modules support multiple algorithm families simultaneously, certificates include machine-readable algorithm identifiers, and deployment pipelines enforce compliance with current cryptographic standards.
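A small sketch can show what "not hardcoded" means in practice. The example below uses hash algorithms from Python's standard library as a stand-in, since the standard library has no signature or KEM primitives; the `CRYPTO_POLICY` dictionary, the `fingerprint` and `verify` helpers, and the `algorithm:digest` tag format are all assumptions for illustration.

```python
import hashlib
import hmac

# Central policy: the only place an algorithm name appears.
CRYPTO_POLICY = {"integrity_hash": "sha256"}

# Algorithms the policy currently accepts when verifying older values.
ALLOWED_HASHES = {"sha256", "sha3_256"}


def fingerprint(data: bytes, policy: dict = CRYPTO_POLICY) -> str:
    """Hash data with the policy's algorithm and tag the result with its name."""
    algorithm = policy["integrity_hash"]
    return f"{algorithm}:{hashlib.new(algorithm, data).hexdigest()}"


def verify(data: bytes, tagged: str) -> bool:
    """Check a tagged fingerprint, rejecting algorithms outside the allowlist."""
    algorithm, _, expected = tagged.partition(":")
    if algorithm not in ALLOWED_HASHES:
        return False
    actual = hashlib.new(algorithm, data).hexdigest()
    return hmac.compare_digest(actual, expected)


old = fingerprint(b"release-1.2.3")                                  # current policy
new = fingerprint(b"release-1.2.3", {"integrity_hash": "sha3_256"})  # policy after a change

print(old.split(":")[0], verify(b"release-1.2.3", old))
print(new.split(":")[0], verify(b"release-1.2.3", new))
```

Application code calls `fingerprint` and `verify` without naming an algorithm, so changing the policy entry is the whole migration for new values, while the algorithm tag keeps older values verifiable until they are retired from the allowlist.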

Cryptographic agility is especially important during the transition to PQC. Organizations will need to support both classical and post-quantum algorithms at the same time, migrate in phases, and adapt as standards continue to mature. It also plays a key role in vendor and infrastructure decisions. Systems that require firmware replacement, hardware upgrades, or vendor intervention to change cryptographic algorithms create long-term operational and security risk. In contrast, systems designed with agility allow cryptographic components to evolve without disrupting business operations.

Ultimately, cryptographic agility transforms cryptography from a fixed implementation into a manageable, adaptable security layer. It ensures that organizations can respond to new threats, adopt stronger algorithms, and maintain long-term security without repeated large-scale migrations.

Executing the Migration

With an inventory in hand, a clear understanding of your urgency level, and a commitment to embedding cryptographic agility into everything you build and buy going forward, you’re ready to actually execute. The central principle is not to introduce new vulnerabilities while trying to remove old ones. Rushed migrations and poorly understood hybrid implementations can create security regressions worse than the original problem.

Phase 1: Prioritize by risk exposure

Not everything migrates at the same time. Use your inventory data to rank assets by the intersection of two factors: the sensitivity and longevity of the data they protect, and the feasibility of migration given technical and operational constraints.

Assets protecting data with a fifteen-plus-year confidentiality requirement need migration first. Embedded systems that require full hardware replacement can migrate later, but procurement decisions must happen now.
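One simple way to turn those two factors into an ordering is sketched below. The `Asset` fields, the 1 to 5 scales, and the scoring rule (risk first, with easier migrations breaking ties) are assumptions for illustration; any real scheme should be calibrated against your own inventory data.

```python
from typing import NamedTuple


class Asset(NamedTuple):
    name: str
    confidentiality_years: int  # how long the protected data must stay secret
    sensitivity: int            # 1 (low) to 5 (high)
    feasibility: int            # 1 (hardware replacement) to 5 (config change)


def risk_score(asset: Asset) -> int:
    """Longer-lived, more sensitive data scores higher."""
    return asset.confidentiality_years * asset.sensitivity


def migration_order(assets: list[Asset]) -> list[Asset]:
    """Highest risk first; among equal risk, the easier migration goes first."""
    return sorted(assets, key=lambda a: (-risk_score(a), -a.feasibility))


# Hypothetical assets for illustration.
assets = [
    Asset("hr-database", 5, 3, 4),
    Asset("patient-records", 30, 5, 3),
    Asset("plc-firmware-signing", 20, 4, 1),
]

for a in migration_order(assets):
    print(f"{a.name:22} risk={risk_score(a):3} feasibility={a.feasibility}")
```

A low feasibility score does not push an asset out of the queue here; it signals that the procurement decision for that asset has to start early even if deployment comes later.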

Phase 2: Start with transport layer security

TLS is often the most tractable starting point. Major TLS libraries such as OpenSSL and BoringSSL already have PQC support, and liboqs from the Open Quantum Safe project provides PQC algorithm implementations that TLS stacks can integrate. Hybrid key exchange for TLS can be deployed in a relatively contained way, tested thoroughly in staging, and rolled out gradually using feature flags. The performance overhead is typically acceptable for web and API traffic.

Phase 3: Migrate PKI and code signing

Certificate infrastructure changes are high-risk because they affect trust chains system-wide. A poorly executed PKI migration can break authentication for thousands of users overnight.

The recommended approach is to establish a parallel PQC certificate hierarchy, validate it thoroughly in a non-production environment, and gradually shift workloads to the new hierarchy while keeping the classical hierarchy as a fallback. Dual-certificate issuance, i.e., issuing both classical and PQC certificates for the same entity during the transition, is a viable pattern already being used by early adopters.

Phase 4: Application cryptography and data at rest

Migrating database encryption and application-level cryptography requires careful coordination with data engineering teams. For data already encrypted with classical algorithms, organizations have a choice of re-encrypting at rest with a PQC scheme (expensive but complete) or accepting that historical ciphertext remains classically encrypted while ensuring all new data uses PQC.

For most organizations, the realistic answer is new-data-forward encryption with a planned, phased re-encryption of the most sensitive existing records.

Phase 5: Validate, test, and monitor continuously

Every cryptographic change in production needs automated validation, such as cipher suite scanning, certificate chain verification, and key material auditing. Building these checks into your security monitoring pipeline is essential so that regressions such as accidental algorithm downgrades, expired PQC certificates, and misconfigured hybrid implementations are caught automatically rather than discovered during an incident.
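A regression check of this kind can be as simple as comparing the latest scan against a stored baseline. The sketch below assumes a hypothetical scan format (endpoint mapped to a key-exchange posture and a certificate expiry date) and a hypothetical `find_regressions` helper; it flags key-exchange downgrades and certificates close to expiry.

```python
from datetime import date, timedelta

# Relative strength of a key-exchange posture (labels are assumptions).
STRENGTH = {"classical": 0, "hybrid": 1, "pqc": 2}


def find_regressions(baseline: dict, current: dict,
                     today: date, warn_days: int = 30) -> list[str]:
    """Compare a current scan to a baseline and list findings."""
    findings = []
    for endpoint, now in sorted(current.items()):
        before = baseline.get(endpoint)
        if before and STRENGTH[now["kex"]] < STRENGTH[before["kex"]]:
            findings.append(
                f"{endpoint}: key exchange downgraded {before['kex']} -> {now['kex']}"
            )
        if now["cert_expires"] - today <= timedelta(days=warn_days):
            findings.append(f"{endpoint}: certificate expires {now['cert_expires']}")
    return findings


# Hypothetical scan data for illustration.
baseline = {"api.example.com": {"kex": "hybrid", "cert_expires": date(2026, 1, 1)}}
current = {"api.example.com": {"kex": "classical", "cert_expires": date(2025, 1, 15)}}

for finding in find_regressions(baseline, current, today=date(2025, 1, 1)):
    print(finding)
```

Passing `today` in explicitly keeps the check deterministic, which makes it straightforward to run in a pipeline and to test.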

Governance and Ownership

Technical challenges are only part of the picture. Many PQC migration efforts stall not because of algorithmic complexity but because of organizational friction. Examples include unclear ownership, competing priorities, insufficient budget, and the challenge of coordinating teams.

The recommended structure is a dedicated PQC Steering Team with representation from each stakeholder function and explicit executive sponsorship. This team owns the inventory, sets migration priorities, coordinates with vendors, tracks standards evolution, and provides regular progress updates to leadership. Cryptographic migration needs someone whose job it is to push it forward; it cannot be a task that lives at the bottom of everyone else’s backlog.

Equally important is internal cryptographic literacy. Not every engineer needs to understand lattice problems in depth. But every developer writing code that touches authentication, encryption, or key management should understand the basic threat model and know which libraries and patterns are approved under your cryptographic policy. Developer education, updated secure coding guidelines, and cryptographic pattern libraries are practical solutions.

NIST has recognized that cryptographic migrations have historically taken 10 to 20 years to complete. Cryptographers worldwide have emphasized that transitioning to a quantum-safe infrastructure is far more complex than previous migrations, because it requires not just algorithm swaps but also rebuilding the key management solutions, communication protocols, applications, and systems that incorporate cryptography. That complexity is not a reason to wait; it’s a reason to start earlier.

How can Encryption Consulting Help?

If you are wondering where and how to begin your post-quantum journey, Encryption Consulting is here to support you. You can count on us as your trusted partner, and we will guide you through every step with clarity, confidence, and real-world expertise.

Cryptographic Discovery and Inventory

This is the foundational phase where we build visibility into your existing cryptographic infrastructure. We identify which systems are at risk from quantum threats and assess how ready your current setup is, including your PKI, Hardware Security Modules (HSMs), and applications. The goal is to identify what cryptographic assets exist, where they are used, and how critical they are. This phase includes:

- Comprehensive scanning of certificates, cryptographic keys, algorithms, libraries, and protocols across your IT environment, including endpoints, applications, APIs, network devices, databases, and embedded systems.

- Identification of all systems (on-prem, cloud, hybrid) utilizing cryptography, such as authentication servers, HSMs, load balancers, VPNs, and more.

- Gathering key metadata like algorithm types, key sizes, expiration dates, issuance sources, and certificate chains.

- Building a detailed inventory database of all cryptographic components to serve as the baseline for risk assessment and planning.

PQC Assessment

Once visibility is established, we conduct interviews with key stakeholders to assess the cryptographic landscape for quantum vulnerability and evaluate how prepared your environment is for PQC transition. This phase includes:

- Analyzing cryptographic elements for exposure to quantum threats, particularly those relying on RSA, ECC, and other soon-to-be-broken algorithms.

- Reviewing how PKI and HSMs are configured, and whether they support post-quantum algorithm integration.

- Analyzing applications for hardcoded cryptographic dependencies and identifying those requiring refactoring.

- Delivering a detailed report with an inventory of vulnerable cryptographic assets, risk severity ratings, and prioritization for migration.

PQC Strategy & Roadmap

With risks identified, we work with you to develop a custom, phased migration strategy that aligns with your business, technical, and regulatory requirements. This phase includes:

- Creating a tailored PQC adoption strategy that reflects your risk appetite, industry best practices, and future-proofing needs.

- Designing systems and workflows to support easy switching of cryptographic algorithms as standards evolve.

- Updating security policies, key management procedures, and internal compliance rules to align with NIST and NSA (CNSA 2.0) recommendations.

- Crafting a step-by-step migration roadmap with short-, medium-, and long-term goals, broken down into manageable phases such as pilot, hybrid deployment, and full implementation.

Vendor Evaluation & Proof of Concept

At this stage, we help you identify and test the right tools, technologies, and partners that can support your post-quantum goals. This phase includes:

- Helping you define technical and business requirements for RFIs/RFPs, including algorithm support, integration compatibility, performance, and vendor maturity.

- Identifying top vendors offering PQC-capable PKI, key management, and cryptographic solutions.

- Running PoC tests in isolated environments to evaluate performance, ease of integration, and overall fit for your use cases.

- Delivering a vendor comparison matrix and recommendation report based on real-world PoC findings.

Pilot Testing & Scaling

Before full implementation, we validate everything through controlled pilots to ensure real-world viability and minimize business disruption. This phase includes:

- Testing the new cryptographic models in a sandbox or non-production environment, typically for one or two applications.

- Validating interoperability with existing systems, third-party dependencies, and legacy components.

- Gathering feedback from IT teams, security architects, and business units to fine-tune the plan.

Once everything is tested successfully, we support a smooth, scalable rollout, replacing legacy cryptographic algorithms step by step, minimizing disruption, and ensuring systems remain secure and compliant. We continue to monitor performance and provide ongoing optimization to keep your quantum defense strong, efficient, and future-ready.

PQC Implementation

Once the plan is in place, it is time to put it into action. This is the final stage where we execute the full-scale migration, integrating PQC into your live environment while ensuring compliance and continuity. This phase includes:

- Implementing hybrid models that combine classical and quantum-safe algorithms to maintain backward compatibility during transition.

- Rolling out PQC support across your PKI, applications, infrastructure, cloud services, and APIs.

- Providing hands-on training for your teams along with detailed technical documentation for ongoing maintenance.

- Setting up monitoring systems and lifecycle management processes to track cryptographic health, detect anomalies, and support future upgrades.

Transitioning to quantum-safe cryptography is a big step, but you do not have to take it alone. With Encryption Consulting by your side, you will have the right guidance and expertise needed to build a resilient, future-ready security posture.

Reach out to us at info@encryptionconsulting.com and let us build a customized roadmap that aligns with your organization’s specific needs.

Conclusion

The post-quantum cryptographic transition is unlike most technology migrations. It doesn’t have a single hard deadline. It doesn’t announce itself with a system outage or a breach notification. It rewards early movers with control, optionality, and the ability to do this work carefully, and it punishes late movers with rushed, expensive, high-risk execution under time pressure.

The good news is that the foundations are in place. Three practices underpin a resilient cryptographic framework going into the quantum era: cryptographic inventory, cryptographic agility, and cryptographic defence-in-depth. None of these requires waiting. All of them can start today, with existing tools and existing teams.

The finalized NIST standards for ML-KEM, ML-DSA, and SLH-DSA are ready. Major open-source libraries, cloud providers, and hardware vendors are moving toward PQC support at pace. The infrastructure for this migration exists. What most organizations are still missing is the organizational will to treat it as a first-order priority.

Start with an inventory. Know what cryptography you’re using, what data it protects, how long that data needs to stay secure, and who owns each system. From there, build your roadmap based on real risk exposure. Invest in cryptographic agility in everything you build or buy going forward. Execute the migration in phases that balance speed with rigor. And get a named owner in the room whose job it is to make sure it actually happens.

The best time to start your PQC migration was five years ago. The second-best time is right now.
